B-Human - At a Glance¶

The purpose of this document is to provide a brief overview of the RoboCup team B-Human and its research and developments. The background of the team as well as the major software components are described, along with several links that provide deeper information on individual topics.

About RoboCup¶

RoboCup is an international research and education initiative. Its goal is to foster artificial intelligence and robotics research by providing a standard problem where a wide range of technologies can be examined and integrated. RoboCup competitions have been held annually since 1997, including a world championship in the summer as well as several regional events. RoboCup is divided into several subdomains that feature different kinds of competitions. An overview is available on the Official RoboCup Website.

The oldest and probably most popular domain is RoboCupSoccer, which features multiple competitions for autonomous agents and robots that compete in football games, including simulated environments, wheeled robots, and large humanoid robots.

One of these football leagues is the Standard Platform League (SPL), in which the B-Human team is competing. In this league, all teams use the same robot platform, which they are not allowed to modify, and develop intelligent software that makes the robots play football autonomously. The current standard robot is the humanoid robot NAO. We give a detailed description of this platform in a later section of this document. The robots play in teams of seven against each other on a field that is about 75 square meters large. During a game, all robots have to act completely autonomously but can communicate with their teammates. No intervention by human team members is allowed. All details about the competition, including the rules and results of previous competitions, can be found on the SPL Homepage.

In addition to the main football games, multiple small extra competitions are held, such as the so-called Technical Challenges, in which robots have to solve ambitious tasks that might become part of future game rules, or competitions for mixed teams.

The B-Human Team¶

B-Human is a joint RoboCup team of the Universität Bremen's Computer Science department and the Cyber-Physical Systems department of the German Research Center for Artificial Intelligence (DFKI). The team was founded in 2006 as a team in the RoboCup's Humanoid League but switched to participating in the Standard Platform League in 2009. Since then, B-Human has won thirteen European competitions and has become RoboCup world champion nine times.

The number of team members varies from year to year and is always somewhere between 8 and 30. In addition to some researchers and still active alumni, the majority of the team members are students of the Universität Bremen. Most of them are participants in student projects, which are an integral part of the computer science curriculum. Furthermore, there are always students who write their Bachelor's or Master's thesis about a RoboCup-related topic. Some others program our robots in their spare time, without earning any academic credits.

Regular participation in RoboCup competitions is an expensive business. Thus, the B-Human team is glad to have multiple sponsors whose support allows us to cover a majority of our expenses for new robots and travel around the world. Our current main sponsor is CONTACT Software, one of the leading vendors of open solutions for product data management (PDM), product lifecycle management (PLM) and project management. Our other major sponsors are cellumation, JUST ADD AI, and the Alumni der Universität Bremen e.V.

Robot Hardware and Software Development¶

As all teams have to use the same robot platform, one could say that the SPL is a software competition, carried out on robots. This section provides a brief overview of the currently used robots as well as of B-Human's software development environment.

Robot Hardware¶

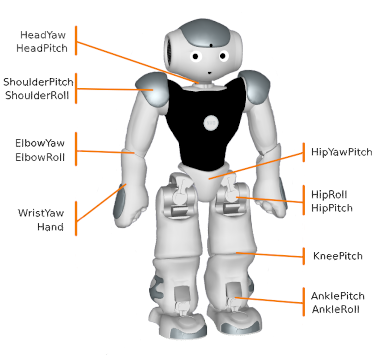

The Standard Platform League currently uses the NAO robots made by Aldebaran. The first version of this platform entered the pitch at RoboCup 2008. In the meantime, the NAO\(^\mathsf6\), released in 2018, has become the current state of the art. NAO's appearance has remained almost unchanged since the beginning: a 60 cm tall white humanoid robot. However, the internals have been upgraded multiple times.

The NAO\(^\mathsf6\) is the first version with a multi-core processor, which allows multiple threads to run truly in parallel. The NAO has an Ethernet and a USB port that enable transferring data quickly, but also a WiFi module for communication while the robot is in use.

NAO robots perceive their environment in many ways. Most important are the cameras, mounted above each other in the robot's head, one looking forward, the other one down to the floor in front of the robot's feet. Additionally, there are many other sensors: An inertial measurement unit (accelerometer and gyroscope), force sensitive resistors in the robot's feet, contact sensors on the head, the hands and the front of the feet, microphones, and a short-range sonar in the robot's chest (which is not used by B-Human).

The NAO robots have 26 joints controlled by 25 independent actuators. This is because one motor in the hip controls two joints at once. For each actuator, there is also a corresponding joint position sensor.

B-Human Software¶

Our software currently consists of about 150000 lines of code. The only programming language that we use for robot control software and simulation is C++, as it offers a combination of two important characteristics: a huge variety of modern programming language features together with the possibility to generate highly efficient code. Especially the latter is needed given the robot's limited computational resources and the highly dynamic football environment.

The software development does not occur directly on the robots but on the computers of the team members. It is currently possible to develop and test the robot software on all three major operating systems: Windows, macOS, and Linux. Only the final binary program gets deployed to the robots.

Some capabilities of our robots, such as the detection of other players, have been realized by applying machine learning techniques. However, the robots themselves do not learn, especially not during a game. The robots record data, such as camera images, that is used for the actual learning on an external, more powerful computer. The result of such a learning process is the configuration of a neural network that can later be executed by the robots. For training our neural networks, we currently mostly use the TensorFlow framework. To execute them efficiently on the robots, we implemented our own inference engine CompiledNN, which is optimized specifically for the CPU architecture of the NAO robots.

The usage of a standard robot platform has the benefit that sharing ideas and code with others becomes relatively easy, compared to domains in which every group builds its own robots. B-Human annually releases extensive parts of its own code in its public GitHub repository. Many teams from all over the world base their developments on our code. We developed everything ourselves, except for parts of our walking motion, which is based on the approach of the rUNSWift team.

Simulation and Debugging¶

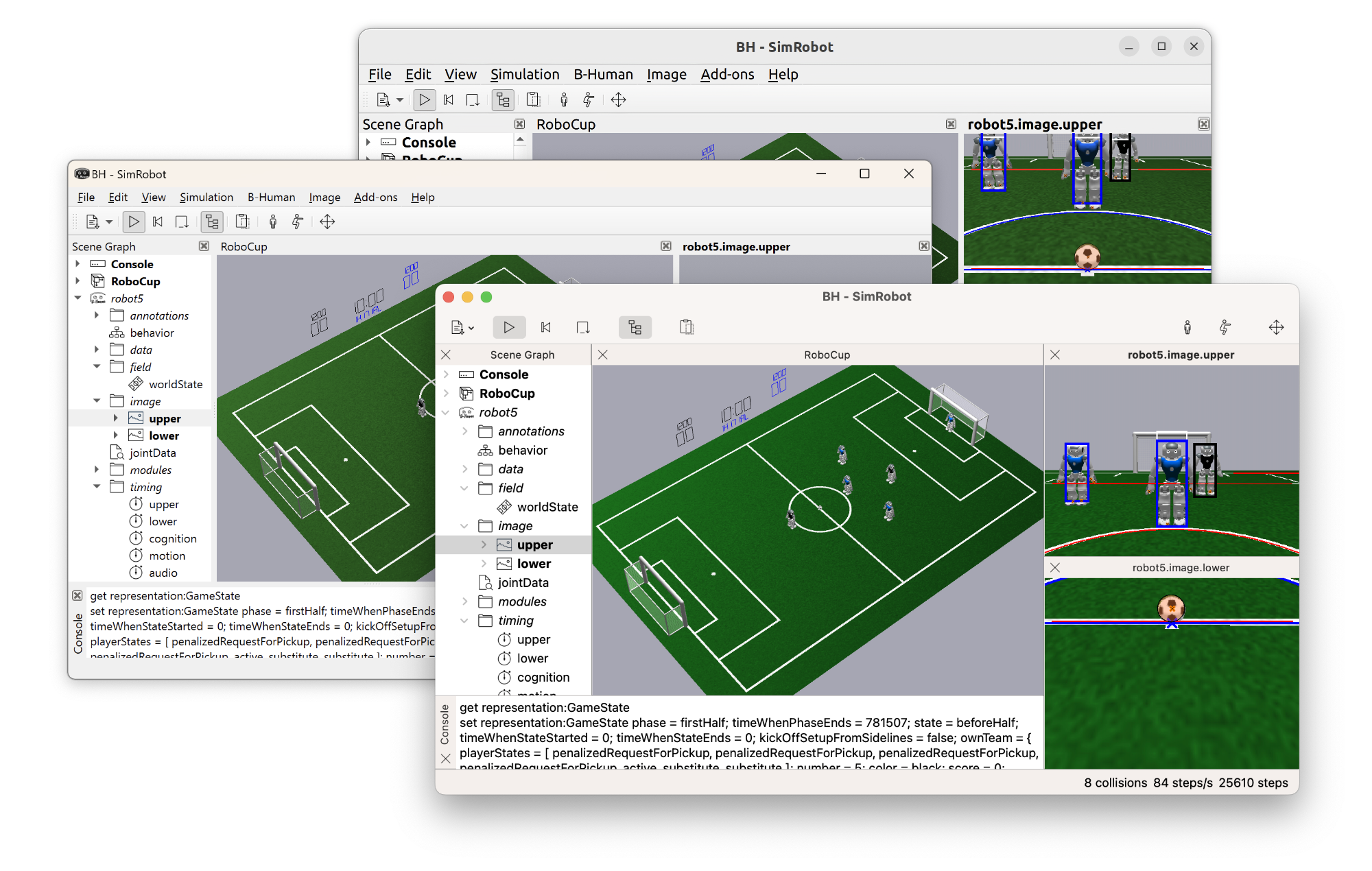

The major tool for development and debugging is the SimRobot application, which has been developed and maintained by our working group for many years.

Within the simulation environment, it is possible to play complete 7 vs. 7 robot football games and to run a separate instance of the full B-Human code for each robot. SimRobot is capable of simulating almost all sensors as well as all actuators of the current NAO robot. The popular Open Dynamics Engine subjects everything happening inside the simulation to physics and also handles collisions of objects.

Furthermore, SimRobot can connect to real robots and display sensor data and debugging information sent by a robot, as well as send commands for debugging purposes to a robot. Data recorded by a robot during a game can also be replayed and analyzed within SimRobot.

Software Architecture¶

A piece of software that allows a robot to perform a task as complex as playing football consists of an immense number of individual parts that have to solve all involved subtasks and need efficient and effective organization.

Threads¶

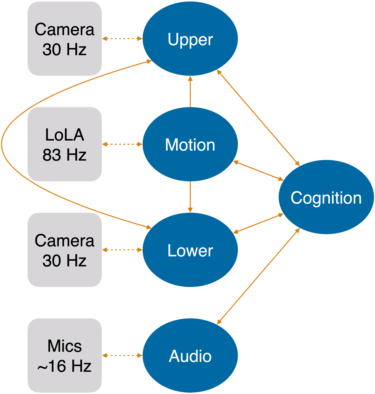

Robots are usually controlled through sense-think-act cycles. This means that measurements are received from the sensors, processed, and actions are derived based on these new measurements together with previous measurements and decisions. These actions are then executed. The NAO takes 30 images per second with each of its two cameras. All other measurements, such as the current positions of its joints, are received at a rate of 83 Hz. That is also the frequency at which the NAO accepts commands for its motors. Since all measurements should be immediately processed when they arrive, the processing is distributed over five main processing threads: Upper and Lower process the images from the respective cameras. Motion receives sensor measurements from and sends commands back to LoLA, NAO's low level control interface. Audio records data from NAO's microphones and processes them. Cognition receives its input from Upper, Lower, and Audio and sends high level commands to Motion.

Besides, there is a sixth thread Debug that functions as a gateway for transmitting debug data between all other threads and SimRobot.

Modules and Representations¶

The main concepts in the robot software are representations and modules. A representation defines a data structure that contains cohesive information of one processing task, for example, if and where the ball was seen in an image. In addition to the data, a representation may define how its content can be visualized in SimRobot. Each representation has a single instance per thread, which is shared by all modules in that thread.

A module, on the other hand, defines and implements how data is processed. First, a module specifies which representations and configuration parameters it requires as input and which representations it will provide as a result, typically only one. For each representation the module wants to alter, it implements an update method. Therein the new values for this representation are computed.
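The relationship between a representation and a module can be illustrated with a minimal sketch in plain C++. This is not B-Human's actual framework API: the names `BallPercept`, `BallModel`, and `BallModelProvider`, their fields, and the units are hypothetical and only serve to show a module that requires one representation and provides another through an update method.

```cpp
// Hypothetical representation: whether and where the ball was seen.
struct BallPercept {
  bool seen = false;
  float x = 0.f;  // assumed: position relative to the robot in mm
  float y = 0.f;
};

// Hypothetical representation provided by the module below.
struct BallModel {
  float x = 0.f;
  float y = 0.f;
  int framesSinceSeen = 0;
};

// Hypothetical module: requires a BallPercept, provides a BallModel.
class BallModelProvider {
public:
  explicit BallModelProvider(const BallPercept& percept) : percept(percept) {}

  // Would be called once per cycle by the (here imaginary) framework.
  void update(BallModel& model) const {
    if (percept.seen) {
      model.x = percept.x;
      model.y = percept.y;
      model.framesSinceSeen = 0;
    } else {
      ++model.framesSinceSeen;  // keep the last known position
    }
  }

private:
  const BallPercept& percept;  // required input, read-only
};
```

In this sketch the module only reads its required input and writes exclusively to the representation passed to `update`, which mirrors the separation of inputs and outputs described above.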

The update methods are called automatically by the framework. A dependency graph, built on the information each module provides about required and updated representations, determines the modules' execution order.
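One common way to derive such an execution order is a topological sort of the dependency graph. The following sketch uses Kahn's algorithm and is an illustration under assumptions, not the framework's real implementation: `ModuleInfo`, `executionOrder`, and all module and representation names used with it are hypothetical, and representations that no module provides are treated as external inputs.

```cpp
#include <cstddef>
#include <map>
#include <queue>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical description of a module: what it reads and what it updates.
struct ModuleInfo {
  std::string name;
  std::vector<std::string> required;  // representations read
  std::vector<std::string> provided;  // representations updated
};

// Returns the module names in an order in which every required
// representation is provided before it is read (Kahn's algorithm).
std::vector<std::string> executionOrder(const std::vector<ModuleInfo>& modules) {
  // Map each representation to the index of the module providing it.
  std::map<std::string, std::size_t> providerOf;
  for (std::size_t i = 0; i < modules.size(); ++i)
    for (const std::string& r : modules[i].provided)
      providerOf[r] = i;

  // Build edges provider -> consumer and count unmet requirements.
  std::vector<std::vector<std::size_t>> dependents(modules.size());
  std::vector<std::size_t> pending(modules.size(), 0);
  for (std::size_t i = 0; i < modules.size(); ++i)
    for (const std::string& r : modules[i].required) {
      const auto it = providerOf.find(r);
      if (it == providerOf.end())
        continue;  // assumed to be an external input
      dependents[it->second].push_back(i);
      ++pending[i];
    }

  std::queue<std::size_t> ready;
  for (std::size_t i = 0; i < modules.size(); ++i)
    if (pending[i] == 0)
      ready.push(i);

  std::vector<std::string> order;
  while (!ready.empty()) {
    const std::size_t m = ready.front();
    ready.pop();
    order.push_back(modules[m].name);
    for (const std::size_t d : dependents[m])
      if (--pending[d] == 0)
        ready.push(d);
  }
  if (order.size() != modules.size())
    throw std::runtime_error("cyclic dependency between modules");
  return order;
}
```

A cycle between modules leaves some modules with unmet requirements, which the sketch reports as an error instead of silently producing an incomplete order.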

The framework also handles the exchange of representations that are provided and required in different threads transparently.

This setup allows students new to the project a smooth start into development as they only need to understand the code of the area they are working on.

In addition to modules and representations, some helper tools provide algorithms and data structures used in multiple modules. These range from simple data structures used in many modules and representations (e.g. vectors and angles) and helper functions such as geometry routines to the module framework itself.

Areas of Research¶

As previously mentioned, the whole robot software is divided into modules. These are grouped in a set of categories that reflect our different areas of research and development. In this section, a brief overview of the most important challenges and our current solutions is given.

Perception¶

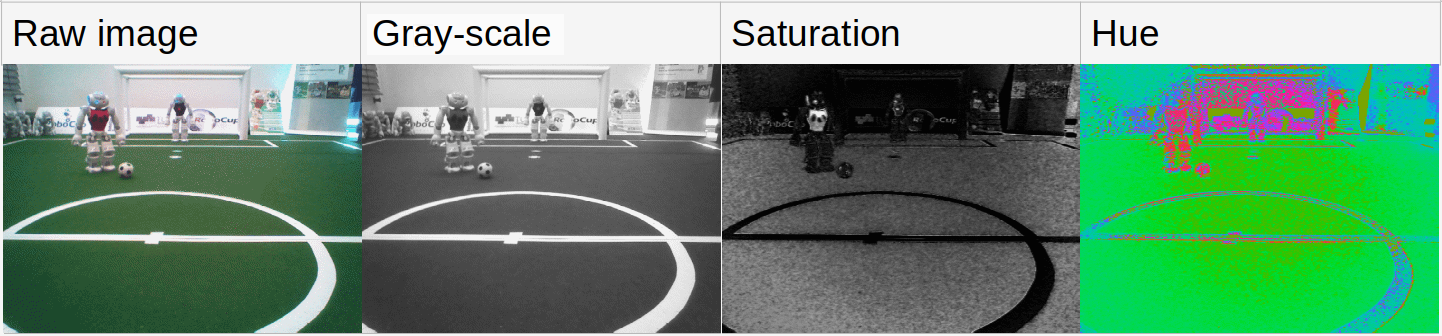

Perception is concerned with processing images taken by the cameras and detecting objects in them. B-Human currently perceives the ball, other robots, field lines, their crossings, penalty marks and the center circle. The first step in the overall process is to split the raw YCbCr image as delivered by the camera into flat grayscale, saturation and hue images. All the involved images can be visualized as seen in the image above.
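To make the channel split more concrete, here is a minimal per-pixel sketch. It assumes one plausible definition (grayscale is the luma channel, saturation is the normalized chroma magnitude, hue is the chroma angle mapped to 0..255); the exact formulas used in the B-Human code may differ, and the names `YCbCrPixel`, `GraySatHue`, and `splitPixel` are hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

struct YCbCrPixel { std::uint8_t y, cb, cr; };
struct GraySatHue { std::uint8_t gray, saturation, hue; };

// Splits one YCbCr pixel into grayscale, saturation and hue values.
// Assumption: Cb and Cr are centered at 128.
GraySatHue splitPixel(const YCbCrPixel& p) {
  const double pi = 3.14159265358979323846;
  const double cb = p.cb - 128.0;
  const double cr = p.cr - 128.0;
  const double chroma = std::sqrt(cb * cb + cr * cr);
  const double maxChroma = std::sqrt(2.0) * 128.0;

  double angle = std::atan2(cr, cb);  // range -pi..pi
  if (angle < 0.0)
    angle += 2.0 * pi;                // range 0..2*pi

  GraySatHue out;
  out.gray = p.y;
  out.saturation = static_cast<std::uint8_t>(
      std::lround(std::min(1.0, chroma / maxChroma) * 255.0));
  // The modulo wraps an angle that rounds up to a full turn back to 0.
  out.hue = static_cast<std::uint8_t>(
      std::lround(angle / (2.0 * pi) * 256.0) % 256);
  return out;
}
```

Applying such a function to every pixel yields three single-channel images, which is the kind of flat representation later processing stages can work on independently.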

Further preprocessing steps are finding regions of the same color on scan lines (turning a 2D problem into a collection of 1D problems) and detecting the boundary between the field and the surroundings (as all other objects must be below that in the image). From then on, specialized modules per object category take over. The field line and center circle perceptors are completely based on the scan lines and the color classified image. Perceiving balls, penalty marks and line crossings consists of two stages: the first identifies candidate spots and the second classifies them using convolutional neural networks (CNNs). The robot perception (at least on the upper camera) uses another CNN that operates on a raw downscaled grayscale image and directly predicts bounding boxes of robots.
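The scan-line step mentioned above can be illustrated by a simple run-length segmentation of a single line of classified pixels. This is a sketch under assumptions: the color classes in `Color`, the `Region` type, and the function `segmentScanLine` are hypothetical, and the real preprocessing certainly handles more cases (e.g. noise and subsampling).

```cpp
#include <cstddef>
#include <vector>

// Hypothetical color classes of a classified pixel.
enum class Color { field, white, other };

// A run of equally classified pixels on a scan line: [begin, end).
struct Region {
  std::size_t begin;
  std::size_t end;
  Color color;
};

// Groups consecutive pixels of the same color class into regions.
std::vector<Region> segmentScanLine(const std::vector<Color>& line) {
  std::vector<Region> regions;
  for (std::size_t i = 0; i < line.size(); ++i) {
    if (!regions.empty() && regions.back().color == line[i])
      regions.back().end = i + 1;  // extend the current region
    else
      regions.push_back(Region{i, i + 1, line[i]});  // start a new one
  }
  return regions;
}
```

A short white region enclosed by field-colored regions would, for instance, be a candidate for a field line crossing the scan line.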

Modeling¶

The task of the modeling component is to integrate noisy results from the perception into a consistent model of the objects in the environment over time. This model includes the position and velocity of the ball, the robot's own position and orientation on the field, and the positions and velocities of other robots. In this context, "noise" does not only mean that the measurements are imprecise, but that there may also be entirely wrong measurements (false positives) or no measurements at all (false negatives). Thus, all of the solutions follow the general principle of first associating measurements with object hypotheses that have been formed over time, potentially ruling out false positives in the process, and afterwards updating a probabilistic state estimator with the measurement per hypothesis. B-Human uses different kinds of combinations of Kalman Filters and particle filters for this task. This allows us to infer the values of not directly measurable states such as the robot's own position or the velocity of the ball.
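The principle of first checking whether a measurement fits a hypothesis and then updating a state estimator can be sketched with a one-dimensional Kalman filter. This is a deliberately simplified illustration, not the filters used by B-Human: the type `Kalman1D`, its methods, and the gating threshold are assumptions made for this example.

```cpp
#include <cassert>

// Hypothetical one-dimensional Kalman filter for a single hypothesis.
struct Kalman1D {
  double x;  // state estimate, e.g. a position along one axis
  double p;  // variance of the estimate

  // Prediction: apply the expected motion and grow the uncertainty.
  void predict(double motion, double processVariance) {
    x += motion;
    p += processVariance;
  }

  // Association test: is measurement z within `gate` standard
  // deviations of the predicted measurement?
  bool plausible(double z, double measurementVariance, double gate) const {
    const double innovation = z - x;
    return innovation * innovation <= gate * gate * (p + measurementVariance);
  }

  // Correction: blend the measurement into the estimate.
  void update(double z, double measurementVariance) {
    assert(measurementVariance > 0.0);
    const double k = p / (p + measurementVariance);  // Kalman gain
    x += k * (z - x);
    p *= 1.0 - k;
  }
};
```

A measurement that fails the `plausible` test would not be used to update this hypothesis, which is one simple way to rule out false positives.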

Due to occlusion and a limited field of view and perception range, each robot on its own only has an incomplete model of the environment. Therefore, the robots exchange information via WiFi, which is used to construct a combined ball model and a model of all team members on the pitch.

Behavior Control¶

Based on the estimated world model and information received from its teammates, a robot has to decide which action it will carry out next. This process is organized into multiple layers. Topmost is the strategy component, which outputs a high-level command such as "shoot at the goal", "pass to some teammate" or "walk to a position". It handles selection of a tactic (e.g. defensive or offensive depending on the position of the ball), set plays such as kick-offs or free kicks, role assignment among teammates, and strategic positioning. The role assignment tries to ensure that there is always one player who wants to play the ball, but given the sometimes inconsistent world models and restricted communication, this can fail. The other players choose a target position, either for potential passes or to defend against shots on their own goal.

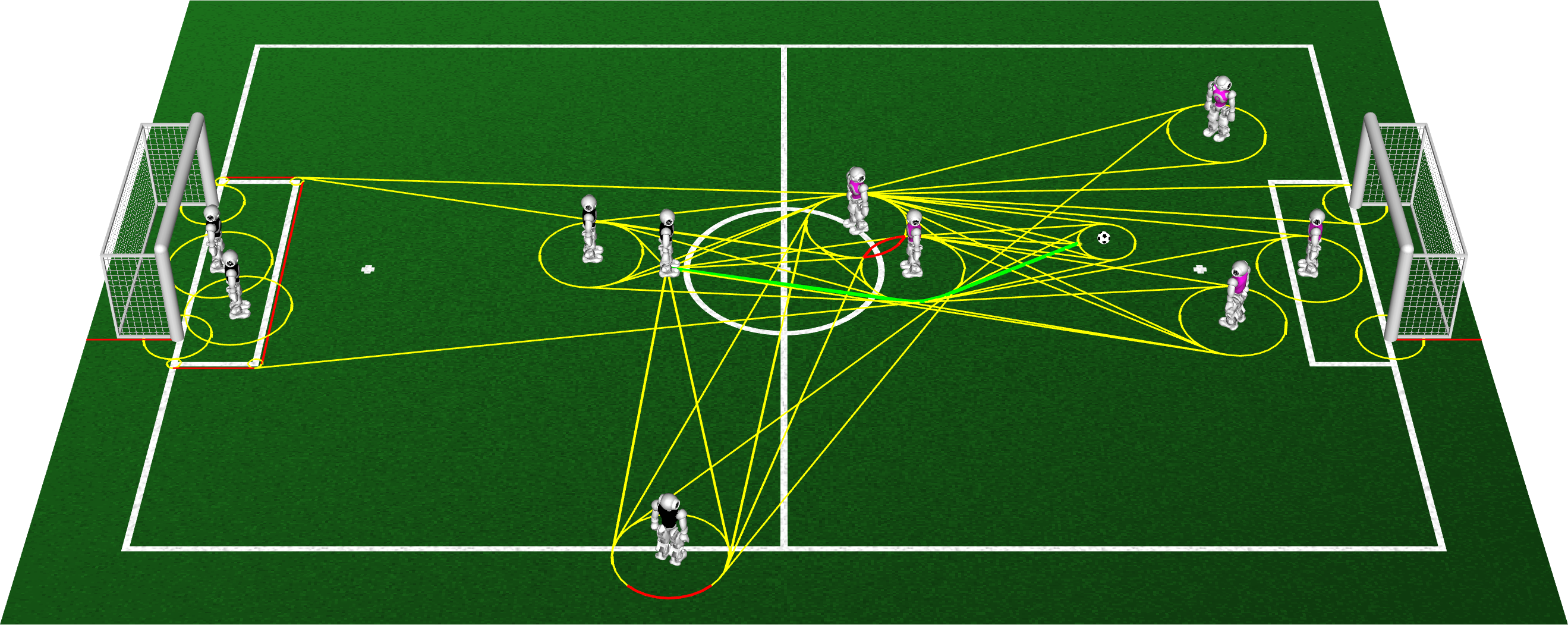

Below the strategy, there is the skill layer, which interprets the strategy's command. It has some autonomy though, i.e. it can override that command if it cannot be executed or if other short-term reactions are necessary (the purpose of this is to reduce the number of cases to handle in high-level code). For instance, dueling with an opponent or catching the ball as a goalkeeper are handled on this layer and are not commanded by the strategy, but are initiated by the skill layer. Similarly, the skill layer avoids entering areas that are illegal as per the rules, e.g. the area around the ball when an opponent was given a free kick, or tries to leave them if already inside. If the high-level command is accepted, it is still the task of this layer to fill in the details, e.g. for a shot command, the concrete kick type and angle on the goal have to be selected. Skills actually form a hierarchy where higher-level skills can call lower-level skills. The lowest level usually fills in a request for the motion engine (which runs in a different thread) and chooses a path to the desired target. For nearby destinations, this means merely reacting to obstacles and aligning to the target orientation. For destinations further away, we invoke a path planner that executes A* search on a 2D visibility graph to avoid getting stuck. The latter is visualized in the image above, in which the robot standing left of the center circle plans a way to the ball.
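The path search described above can be sketched as a generic A* search on a graph whose nodes are 2D points and whose edges connect mutually visible points. The sketch assumes that the visibility edges have already been computed; the names `Point`, `dist`, and `aStar` are hypothetical and do not correspond to B-Human's path planner.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <functional>
#include <limits>
#include <queue>
#include <utility>
#include <vector>

struct Point { double x, y; };

double dist(const Point& a, const Point& b) {
  return std::hypot(a.x - b.x, a.y - b.y);
}

// Returns the node indices from start to goal, or an empty path if the
// goal is unreachable. edges[n] lists the nodes visible from node n.
std::vector<std::size_t> aStar(const std::vector<Point>& nodes,
                               const std::vector<std::vector<std::size_t>>& edges,
                               std::size_t start, std::size_t goal) {
  const double inf = std::numeric_limits<double>::infinity();
  const std::size_t none = nodes.size();
  std::vector<double> cost(nodes.size(), inf);
  std::vector<std::size_t> parent(nodes.size(), none);

  // Entries are (cost so far + straight-line distance to goal, node).
  typedef std::pair<double, std::size_t> Entry;
  std::priority_queue<Entry, std::vector<Entry>, std::greater<Entry>> open;
  cost[start] = 0.0;
  open.push(Entry(dist(nodes[start], nodes[goal]), start));

  while (!open.empty()) {
    const Entry top = open.top();
    open.pop();
    const std::size_t n = top.second;
    if (n == goal)
      break;
    if (top.first > cost[n] + dist(nodes[n], nodes[goal]))
      continue;  // outdated entry, a cheaper way to n was found later
    for (const std::size_t m : edges[n]) {
      const double c = cost[n] + dist(nodes[n], nodes[m]);
      if (c < cost[m]) {
        cost[m] = c;
        parent[m] = n;
        open.push(Entry(c + dist(nodes[m], nodes[goal]), m));
      }
    }
  }

  std::vector<std::size_t> path;
  if (cost[goal] == inf)
    return path;
  for (std::size_t n = goal; n != none; n = parent[n])
    path.push_back(n);
  std::reverse(path.begin(), path.end());
  return path;
}
```

The straight-line distance never overestimates the remaining path length, so the first time the goal is taken from the queue, the path found is a shortest one in the graph.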

Some skills are implemented as state machines. For this, we use CABSL, an agent behavior specification language developed specifically for this purpose.

Proprioception¶

The proprioception processes the data from all the sensors except for the cameras. It computes the poses of the robot's limbs, its center of mass, its 3D orientation in the world and whether the robot is about to fall over. Some of the sensors also require filtering to suppress noise or to detect malfunction.

The most important sensors are the position sensors of the joints, the inertial measurement unit (IMU) and the force sensitive resistors (FSR) of the feet. Using the FSRs, the robot can determine which foot has more weight on it, which is important for our walking method. The accelerometer and gyroscope values from the IMU are filtered by an Unscented Kalman Filter to estimate the torso rotation of the robot. Combined with forward kinematics of the joint positions, a 3D transformation between a reference point on the ground and a fixed point between the hips of the robot is established. This is used, for instance, to transform the pixel coordinates of an object detected in a camera image to positions on the floor relative to the robot. In order to complement the visual robot perception, contacts of the foot bumpers and disturbances to the arm motions are detected and passed on to the obstacle modeling components.

Motion Control¶

Like the other tasks, motion control is divided into multiple modules: walking, kicking while standing, kicking while walking and getting up. While walking or standing, additional modules control the head and the arms on request of the behavior.

Bipedal robots bring the challenge of being unstable and falling over quite easily. We adapted the walking approach from the rUNSWift team. It is based on three major ideas: By alternately lifting up each leg, the robot naturally swings sideways without any actual hip movement, which allows the lifted leg to move over the ground. The instant the lifted foot returns to the ground is then measurable by the foot pressure sensors, which makes it possible to synchronize the modeled walk cycle with the actual state, creating a kind of feedback loop. Lastly, to keep the robot upright, the gyroscope measurement around the pitch axis is low-pass filtered and directly added to the requested ankle pitch joint angle of the foot which is currently on the ground. The stability enhancements via adjusting step lengths are described in this paper.
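The third idea, feeding the filtered gyroscope measurement into the ankle pitch, can be sketched with a first-order low-pass filter. This is an illustration under assumptions: the class `LowPassFilter`, the function `balancedAnklePitch`, the smoothing factor, and the `gain` parameter are hypothetical and not taken from the B-Human or rUNSWift code.

```cpp
#include <cassert>

// First-order (exponential) low-pass filter.
class LowPassFilter {
public:
  // alpha in (0, 1]: smaller values smooth more strongly.
  explicit LowPassFilter(double alpha) : alpha(alpha) {
    assert(alpha > 0.0 && alpha <= 1.0);
  }

  // Feeds one measurement and returns the new filtered value.
  double update(double measurement) {
    value += alpha * (measurement - value);
    return value;
  }

private:
  double alpha;
  double value = 0.0;
};

// Adds the filtered pitch rate, scaled by an assumed gain, to the
// requested ankle pitch of the foot that is currently on the ground.
double balancedAnklePitch(double requestedAnklePitch, double gyroPitchRate,
                          LowPassFilter& filter, double gain) {
  return requestedAnklePitch + gain * filter.update(gyroPitchRate);
}
```

Such a function would be called once per motion cycle for the current support foot, so that a forward or backward tilt of the torso is counteracted at the ankle.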

Kicking with bipedal robots is difficult because the robots must support their weight on one foot for a longer period of time while also swinging the other foot without falling over. In our software, these kicks are described using Bezier curves and stabilized by simple balance controllers. Short and medium-strength kicks can be executed while walking, while for stronger kicks, the robot must stand still to achieve more precise kicks. Getting up is challenging, too, as it should work on the first try but also be as fast as possible. These motions are currently keyframe-based. There are two problems which make getting up hard. Firstly, even the smallest bumps in the floor or pushes from other robots can make the robots fall over again while getting up, because the motions need to generate a precise and high momentum to move the torso above the feet again. Secondly, some leg joints tend to get stuck for a short period of time during this critical phase, which is the main reason for failed get-up attempts.
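As an illustration of how a Bezier curve can describe a foot trajectory, the following sketch evaluates a cubic Bezier curve with de Casteljau's algorithm. The curve degree, the type `Vec3`, and the functions `mix` and `bezier` are assumptions made for this example; the source does not state how the kick trajectories are parameterized in detail.

```cpp
#include <cassert>

struct Vec3 { double x, y, z; };

// Linear interpolation between a and b for t in [0, 1].
Vec3 mix(const Vec3& a, const Vec3& b, double t) {
  return Vec3{a.x + (b.x - a.x) * t,
              a.y + (b.y - a.y) * t,
              a.z + (b.z - a.z) * t};
}

// Evaluates a cubic Bezier curve with control points p0..p3 at
// parameter t using de Casteljau's algorithm.
Vec3 bezier(const Vec3& p0, const Vec3& p1, const Vec3& p2, const Vec3& p3,
            double t) {
  assert(t >= 0.0 && t <= 1.0);
  const Vec3 a = mix(p0, p1, t);
  const Vec3 b = mix(p1, p2, t);
  const Vec3 c = mix(p2, p3, t);
  const Vec3 d = mix(a, b, t);
  const Vec3 e = mix(b, c, t);
  return mix(d, e, t);
}
```

Sampling `t` from 0 to 1 over the duration of a kick phase would yield a smooth sequence of foot positions that starts at `p0` and ends at `p3`, with `p1` and `p2` shaping the swing.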

To keep the robots as well-functioning and safe as possible, they enter a protective pose as soon as falling down becomes inevitable. In addition, while just standing, their joints are adjusted to remain at a low temperature and current. These two approaches reduce the number of broken robots and overheated joints by a large margin.

Further Reading and Future Work¶

A detailed description of our work for RoboCup 2021 is given in our recent B-Human Team Report and Code Release 2021, which is part of our public GitHub repository that contains our released software. A manual for installing and using the B-Human software can be found here on this website.

A full list of our scientific contributions is part of the B-Human Homepage.

B-Human constantly works to improve all parts of the system and always adapts the software to the rules of the game that are updated yearly and make the whole scenario more challenging. Furthermore, B-Human always aims for successful contributions to all new technical challenges and other subcompetitions. Currently, the two major areas of research are perception and behavior: An increasing number of SPL games are played under natural lighting conditions, i.e. direct sunlight changes the appearance of objects on the pitch and makes them difficult to perceive. Thus, more robust approaches, often based on Deep Learning, are developed, until the whole robot vision can reliably cope with such conditions. The robots' behavior still requires more research on both ends: the individual robot skills, especially for precise and fast ball manipulation, as well as complex team coordination to allow plays involving sequences of passes.

To get the latest information about B-Human or to see some videos or images, one can visit our social media channels.

Contact¶

Dr. Tim Laue, Universität Bremen, Homepage

Dr. Thomas Röfer, Deutsches Forschungszentrum für Künstliche Intelligenz, Homepage